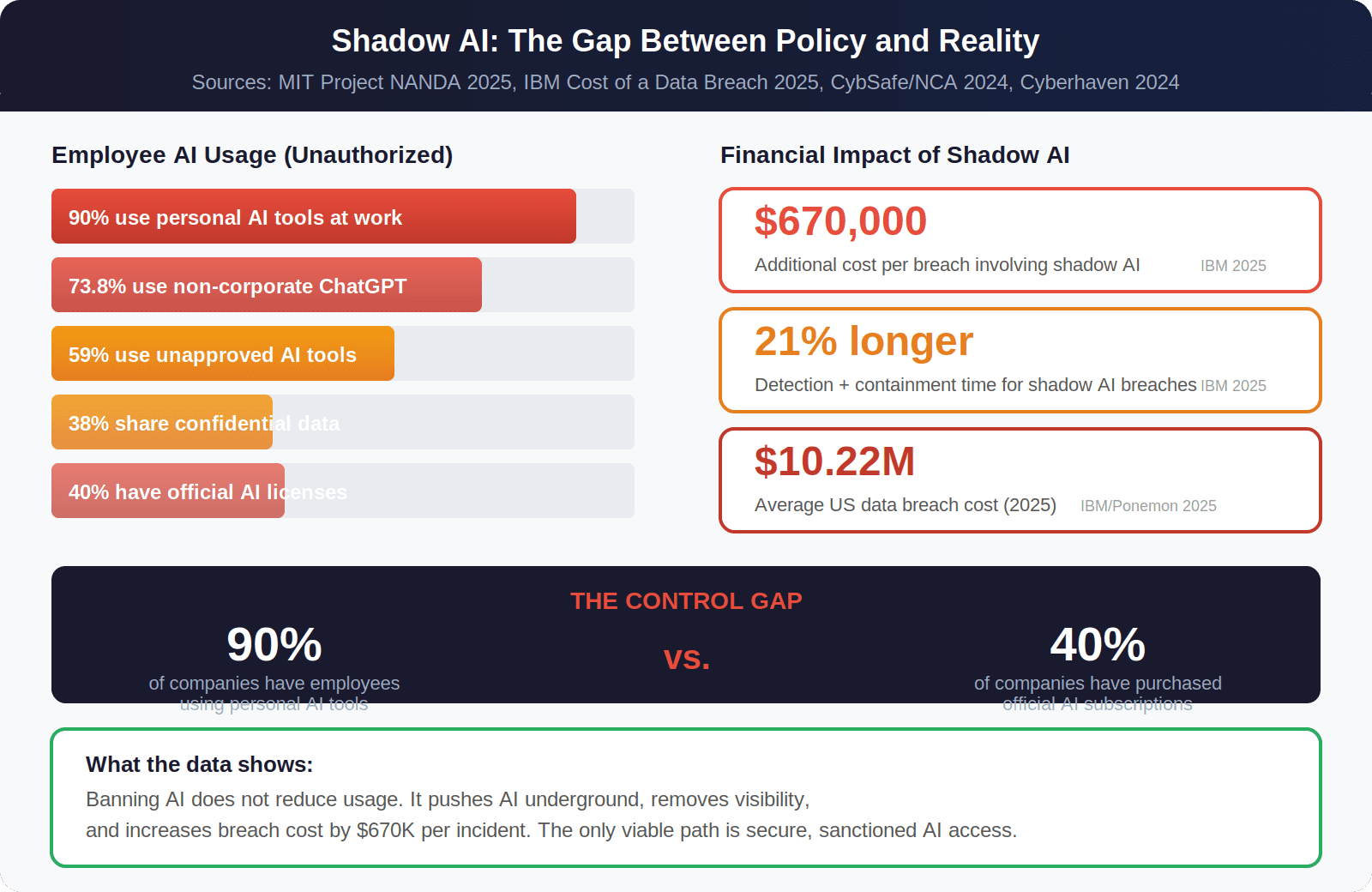

A 2025 study by MIT's Project NANDA found that employees in over 90% of companies regularly use personal AI tools for work, yet only 40% of companies have purchased official AI subscriptions. (MIT Project NANDA, State of AI in Business 2025, https://ide.mit.edu/)

That is not a prediction. It is a measurement of what is already happening inside your company right now. Your team members are pasting customer data into ChatGPT. They are feeding financial projections into Claude. They are running HR documents through Gemini. None of it goes through your security stack.

This is shadow AI, one of the fastest-growing security risks for startups and mid-size companies. Shadow AI refers to any use of AI tools that happens outside your company's approved systems and security controls. The risk lives in the gap between how many employees use AI and how many companies have sanctioned AI access.

This guide covers what shadow AI actually costs, why banning shadow AI fails every time, what data your team is already exposing, and how high-performing companies are addressing the shadow AI problem with architecture instead of policy.

What Is Shadow AI and Why Should Startups Care?

Shadow AI is the use of any AI tool or application inside a company without approval, monitoring, or support from IT or security teams. It includes employees using personal ChatGPT accounts, free-tier Claude accounts, Gemini, or any generative AI tool that the company has not vetted or sanctioned. It is the AI equivalent of shadow IT, but with significantly higher data exposure risk.

According to a 2024 survey of 7,000 employees by CybSafe and the National Cybersecurity Alliance (NCA), 38% of employees share confidential company data with AI platforms without any form of approval. (CybSafe & NCA, 2024, https://www.cybsafe.com/)

For startups, unauthorized AI usage is especially dangerous. Smaller teams mean fewer controls, less IT oversight, and higher stakes per data point. A single engineer pasting proprietary source code into a public AI model can expose trade secrets to competitors. A single HR manager running employee records through an unapproved tool can trigger GDPR or HIPAA violations.

The velocity of adoption is accelerating because employees see the productivity benefits immediately. Zendesk's CX Trends 2025 report found that unsanctioned AI usage in healthcare, manufacturing, and financial services surged more than 200% year over year. The tools are too useful and too accessible for policies alone to contain them.

The risk is compounded by how consumer generative AI tools handle data. Many retain user inputs and may use them for model training unless the user explicitly opts out. That means every piece of confidential information entered into a personal ChatGPT account can persist in a system your company does not control, cannot audit, and has no way to delete it from.

The Samsung Incident: A $670,000 Warning for Every Startup Using Unsanctioned AI

In March 2023, Samsung engineers used ChatGPT for three separate work tasks: debugging proprietary source code, optimizing chip-testing sequences, and generating meeting notes from internal discussions. Each incident occurred within a 20-day window after Samsung allowed ChatGPT access internally. (Dark Reading, 2023, https://www.darkreading.com/)

None of these employees acted with bad intent. They used the fastest tool available to get their work done. But under OpenAI's consumer data policy at the time, what they entered could be retained and used to train OpenAI's models. Samsung's proprietary source code, chip-testing data, and internal meeting discussions were now sitting on external servers outside Samsung's control.

Samsung responded by banning ChatGPT company-wide. The ban did not work. Unauthorized usage continued on personal devices and accounts. JPMorgan and Goldman Sachs imposed similar restrictions with similar results. Employees found workarounds because the productivity gains were too significant to abandon.

IBM's 2025 Cost of a Data Breach Report quantifies the financial damage. Organizations with high levels of shadow AI paid an average of $670,000 more per breach than organizations with low levels of shadow AI or none. These breaches also take 21% longer to detect and contain because security teams have no visibility into the attack vector, no logs to trace, and no alerts to trigger. (IBM Cost of a Data Breach Report, 2025)

The average US data breach now costs $10.22 million, according to the same IBM and Ponemon Institute report. High levels of shadow AI push breach costs roughly $670,000 higher on average, because the breach involves tools and data flows that no one in the organization can see or audit. For a startup with limited cash reserves, a breach of this magnitude can be an extinction-level event.

Why Banning AI Tools Does Not Solve the Shadow AI Problem

Cyberhaven's 2024 research found that 73.8% of ChatGPT accounts used in the workplace are personal, non-corporate accounts that lack any enterprise security controls. For Gemini, that number is 94.4%. (Cyberhaven, 2024, https://www.cyberhaven.com/)

This is the core problem with prohibition. Banning AI on corporate networks does not stop usage. It pushes unauthorized usage onto personal devices, personal accounts, and personal networks where the company has zero visibility. The risk does not decrease. It becomes invisible.

Here is what happens when a company bans AI tools:

Employees switch to personal phones and tablets to access AI tools

They tether to personal hotspots or home wifi networks

Unauthorized AI usage becomes invisible to IT and security teams

Data leakage risk increases because there are no controls at all

The company loses productivity gains while keeping all the risk

A 2025 Cybernews survey of over 1,000 US employees confirmed this pattern: 59% admit to using unapproved AI tools for work. Among employees who already have access to company-approved AI tools, 85% still also use unapproved alternatives. The reason is consistent: the approved tools are slower, less capable, or more cumbersome than the consumer options. (Cybernews, 2025, https://cybernews.com/)

Banning AI is not a security strategy. It is an abdication of security responsibility. Companies that try prohibition keep arriving at the same result: unsanctioned usage goes underground and becomes harder to detect. The shadow AI problem gets worse, not better.

What Data Is Your Team Actually Exposing Through Shadow AI?

Shadow AI data exposure is not theoretical. Cyberhaven's analysis of enterprise AI usage measured, for each type of sensitive data employees put into AI tools, what share went to non-corporate (personal) accounts:

Source code: 50.8% goes to non-corporate AI accounts

R&D materials: 55.3% goes to non-corporate accounts

HR and employee records: 49% goes to non-corporate accounts

Legal documents: 82.8% goes to non-corporate accounts

Legal documents have the highest share flowing to personal accounts of any category. That category can include draft acquisition contracts, settlement agreements with confidential terms, and materials protected by attorney-client privilege. (Cyberhaven, 2024)

For startups handling investor data, customer PII, or proprietary IP, this exposure can create compliance violations under GDPR, HIPAA, and CCPA, and it can undermine the controls a SOC 2 audit examines. Under these frameworks, sharing regulated data with a third-party AI provider without authorization can be treated as a potential breach event. The risk is particularly acute for startups in fintech, healthtech, and any vertical that handles personally identifiable information.

A Komprise 2025 IT Survey found that 90% of IT leaders are worried about shadow AI, and nearly 80% report that their organizations have already experienced negative outcomes from unauthorized AI usage, including the leaking of sensitive data.

Why Shadow AI Hits Startups Harder Than Enterprises

Enterprise companies can absorb a shadow AI breach. They have legal teams, incident response protocols, cyber insurance, and cash reserves to manage the fallout. Startups do not. A single shadow AI data leak at a 10-person startup can derail a funding round, trigger customer churn, or result in regulatory penalties that consume months of runway.

Shadow AI risk is also harder to detect in startups. Enterprises deploy data loss prevention (DLP) tools, endpoint monitoring, and network traffic analysis to spot unauthorized AI usage. Most startups have none of these controls. The shadow AI activity is completely invisible until something goes wrong.
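To make "network traffic analysis" concrete, here is a minimal sketch of the simplest version a small team could run without buying a tool: scanning a web proxy or DNS log export for requests to consumer AI domains that are not on an approved list. The CSV columns (timestamp, user, host), the domain watch list, and the empty SANCTIONED_HOSTS set are assumptions for illustration, not the export format of any particular product.

```python
import csv
import io
from collections import Counter

# Assumed watch list of consumer generative AI hostnames (illustrative, not exhaustive).
AI_HOSTS = {"chatgpt.com", "chat.openai.com", "claude.ai", "gemini.google.com"}

# Assumed allow list: hosts your company has approved. Empty in this sketch.
SANCTIONED_HOSTS: set[str] = set()


def is_ai_host(host: str) -> bool:
    """Return True if host is a watched AI domain or one of its subdomains."""
    host = host.strip().lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_HOSTS)


def flag_shadow_ai(log_text: str) -> Counter:
    """Count requests per (user, host) to AI domains that are not sanctioned.

    Assumes a CSV export with a header row: timestamp,user,host (hypothetical format).
    """
    hits: Counter = Counter()
    for row in csv.DictReader(io.StringIO(log_text)):
        host = row["host"].strip().lower()
        if is_ai_host(host) and host not in SANCTIONED_HOSTS:
            hits[(row["user"], host)] += 1
    return hits


sample_log = """timestamp,user,host
2025-03-01T09:12:00Z,alice,chatgpt.com
2025-03-01T09:15:00Z,bob,github.com
2025-03-01T09:20:00Z,alice,chatgpt.com
2025-03-01T10:02:00Z,carol,claude.ai
"""

for (user, host), count in flag_shadow_ai(sample_log).most_common():
    print(f"{user} -> {host}: {count} request(s)")
```

A check like this only sees traffic that crosses the corporate network. It cannot see personal phones on personal hotspots, which is exactly the blind spot that banning AI tools creates.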

The asymmetry is stark: startups have the highest shadow AI exposure and the fewest resources to detect or recover from a shadow AI breach. This is why architecture-level solutions matter more for startups than for any other company type. You cannot hire your way out of the shadow AI problem with a 10-person team. You need the solution built into the tools your team already uses.

How Are High-Performing Companies Solving the Shadow AI Problem?

The pattern among companies that effectively manage unauthorized AI risk is consistent. They do three things:

1. Provide a Sanctioned AI Tool That Is Better Than the Unauthorized Alternative

Employees go rogue with AI because the approved alternative is either nonexistent, slow, or worse than ChatGPT's free tier. The fix is to give teams an AI tool that works inside your existing workflow, handles security automatically, and is easier to use than the unauthorized option. When the sanctioned tool is genuinely better, unsanctioned adoption drops.

2. Build AI Governance Into the Architecture

Policies that say 'do not use AI' are ignored. Architecture that isolates each employee's AI instance, encrypts all data in transit, and never trains on company inputs actually enforces compliance. Shadow AI persists when governance lives in a PDF. Unauthorized usage disappears when governance lives in the product.
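What "governance in the product" can mean is easiest to see in code. The following is a hypothetical sketch, assuming a single service sits between employees and the model provider: each employee's history is kept in a separate store, every request leaves an audit record, and requests are blocked unless the provider is configured not to train on inputs. The class name GovernedGateway, the provider_trains_on_inputs flag, and the placeholder response are illustrative assumptions; this is not how any specific product is built.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    user: str
    timestamp: str
    prompt_chars: int  # record size only, so the audit log does not copy sensitive text


@dataclass
class GovernedGateway:
    """Hypothetical sanctioned-AI gateway that enforces policy in code, not in a PDF."""

    # Assumed setting, taken from your vendor contract or account configuration.
    provider_trains_on_inputs: bool
    histories: dict[str, list[str]] = field(default_factory=dict, init=False)
    audit_log: list[AuditEntry] = field(default_factory=list, init=False)

    def send(self, user: str, prompt: str) -> str:
        # Refuse to forward anything if the provider may train on company inputs.
        if self.provider_trains_on_inputs:
            raise PermissionError("provider may train on inputs; request blocked")
        # Each employee gets a separate history; nothing is shared across users.
        history = self.histories.setdefault(user, [])
        history.append(prompt)
        self.audit_log.append(
            AuditEntry(user, datetime.now(timezone.utc).isoformat(), len(prompt))
        )
        # Placeholder: a real deployment would call the model provider here over TLS.
        return f"[response for {user}, turn {len(history)}]"

    def history_for(self, user: str) -> list[str]:
        # Return a copy so one caller cannot mutate another user's stored history.
        return list(self.histories.get(user, []))


gateway = GovernedGateway(provider_trains_on_inputs=False)
gateway.send("alice", "Summarize Q3 payroll variance")
gateway.send("bob", "Draft an offer letter for a new hire")
print(gateway.history_for("alice"))  # only Alice's prompt
print(len(gateway.audit_log))        # one audit entry per request
```

The design choice is that compliance is a precondition of the call rather than a rule employees must remember: if the training setting is wrong, nothing is sent, and every request that does go out is logged.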

3. Deploy AI Where the Data Risk Is Highest

HR, compliance, payroll, and reporting are the functions where employees are most likely to paste sensitive data into unauthorized AI tools. These are also the functions where AI can deliver the most value. Start AI deployment in these high-risk areas to intercept shadow AI usage before it happens.
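One way to intercept sensitive data in HR, payroll, and compliance workflows is to redact obvious identifiers before a prompt ever leaves your perimeter. This is a minimal, regex-based sketch assuming US-style formats; the pattern names and the redact helper are hypothetical, and patterns like these miss many real cases, so treat it as an illustration of the idea rather than a substitute for a DLP product.

```python
import re

# Illustrative patterns only (US-style formats); real DLP coverage is far broader.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace matches with [LABEL] placeholders and report which labels fired."""
    found = []
    for label, pattern in PATTERNS.items():  # dicts keep insertion order
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            found.append(label)
    return text, found


prompt = "Email jane.doe@example.com, SSN 123-45-6789, card 4111 1111 1111 1111."
clean, labels = redact(prompt)
print(clean)
print(labels)
```

Running redaction inside the sanctioned tool, rather than asking employees to scrub data by hand, keeps the safeguard in the workflow where the sensitive data actually appears.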

Diana: Secure AI That Eliminates the Shadow AI Problem

Diana is built for exactly this problem. It is an AI employee that lives inside Slack and handles HR, ops, finance, compliance, and reporting within your existing security perimeter.

Every employee gets their own AI instance. No data is shared between instances. No data is used for model training. Everything runs on OpenClaw with enterprise-grade encryption. The result: your team gets the productivity gains of AI without any of the unauthorized usage risk. There is no reason for employees to use personal AI accounts when Diana provides faster, more capable, and fully secure access from the same Slack workspace they already use.

No new tools to learn. No portals to log into. Just message Diana like a teammate and the work gets done securely, completely, and automatically. Built by the team behind DianaHR. Backed by Y Combinator, General Catalyst, and SNR.

Frequently Asked Questions About Shadow AI

What is the average cost of a shadow AI data breach?

According to IBM's 2025 Cost of a Data Breach Report, organizations with high levels of shadow AI paid an average of $670,000 more per breach than organizations with low levels of shadow AI or none. The average US data breach overall costs $10.22 million.

What percentage of employees use unauthorized AI tools at work?

Research from 2024 and 2025 points to widespread use, though each study measures something different. MIT's Project NANDA found employees in over 90% of companies use personal AI tools. Cybernews found 59% of employees admit to using unapproved tools. Cyberhaven found 73.8% of workplace ChatGPT accounts are non-corporate.

Can banning ChatGPT prevent shadow AI risks?

No. Research shows that banning AI tools pushes unauthorized usage onto personal devices and accounts where IT has zero visibility. Samsung banned ChatGPT after its 2023 data leak, but unapproved usage continued. Providing a secure, sanctioned alternative is more effective than prohibition.

What types of company data do employees share through unsanctioned AI tools?

Cyberhaven's 2024 analysis found that 82.8% of legal documents, 55.3% of R&D materials, 50.8% of source code, and 49% of HR records entered into AI tools go through non-corporate accounts without enterprise security controls.

How can startups protect themselves from shadow AI?

The most effective approach combines three elements: providing a sanctioned AI tool that is better than free alternatives, building security into the AI architecture rather than relying on policy alone, and deploying AI first in high-risk operational areas like HR, compliance, and finance.

The Choice Is Not AI vs. No AI. It Is Visible AI vs. Invisible Risk.

Your employees are already using AI. The only question is whether they are using it through secure, visible channels or through invisible personal accounts that no one on your security team can monitor. Every week you operate without a sanctioned AI solution, you accumulate shadow AI risk that compounds. The longer you wait, the more data leaves your perimeter without a trace.

Get started with Diana today and give your team secure AI that works inside Slack. No shadow AI. No risk. Just results. dianaHR.com
